Software delivery has always balanced speed and safety, but in 2026 that balance looks very different. AI is now writing code, generating tests, reviewing pull requests, and even suggesting infrastructure changes. The delivery pipeline is becoming partly autonomous. That brings real advantages in efficiency and scale – but it also introduces new, less predictable risks that teams need to manage carefully.

This guide walks through how to build a secure, AI-native DevSecOps pipeline that keeps pace with modern development without compromising trust.

1.The Shift to AI-Native Pipelines

Traditional DevSecOps was mainly about adding security tools into the CI/CD pipeline. Today, things have shifted – AI is now part of the entire development lifecycle, influencing how software is built, tested, and delivered.

- Code generation (LLM-assisted development)

- Automated reviews and refactoring

- Intelligent test creation

- Infrastructure-as-Code recommendations

The challenge is no longer just “shift left,” but “secure everywhere AI operates.”

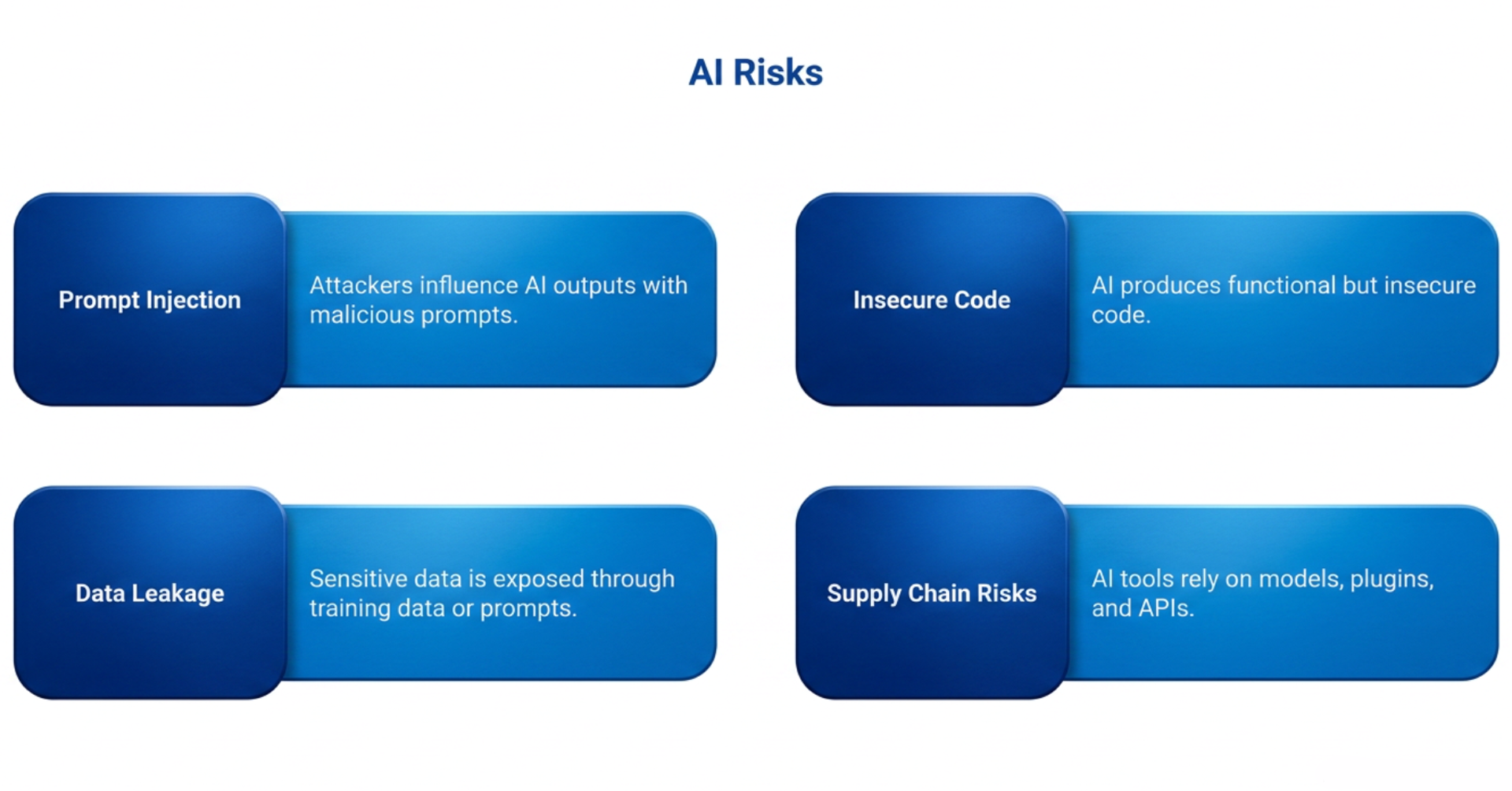

2. New Threat Landscape in 2026

AI introduces risks that classic pipelines weren’t designed for:

Prompt Injection & Model Manipulation

- Attackers can influence AI outputs by crafting malicious prompts or inputs.

Insecure AI-Generated Code

- AI can produce functional but insecure code (e.g., weak auth, improper validation).

Data Leakage

- Sensitive data may be exposed through training data, logs, or prompts.

Supply Chain Risks

- AI tools rely on models, plugins, APIs – each a potential attack vector.

3. Core Principles of AI-Native DevSecOps

To stay secure, pipelines must evolve around a few key principles:

Zero Trust for AI

- Treat AI outputs as untrusted input. Validate everything.

Continuous Verification

- Security checks must run at every stage—not just pre-deploy.

Observability + Explainability

- You need visibility into what AI is doing and why.

Human-in-the-Loop

- Critical decisions should still involve human approval.

4. Securing the AI Development Workflow

Secure Prompt Engineering

- Avoid exposing secrets in prompts

- Use templates with strict input validation

- Log and monitor prompt usage

AI Output Validation

- Run SAST/DAST on AI-generated code

- Enforce secure coding policies automatically

- Reject unsafe patterns (e.g., hardcoded credentials)

Policy-as-Code

- Define security rules in code

- Automatically enforce across pipeline stages

- Integrate with CI/CD tools

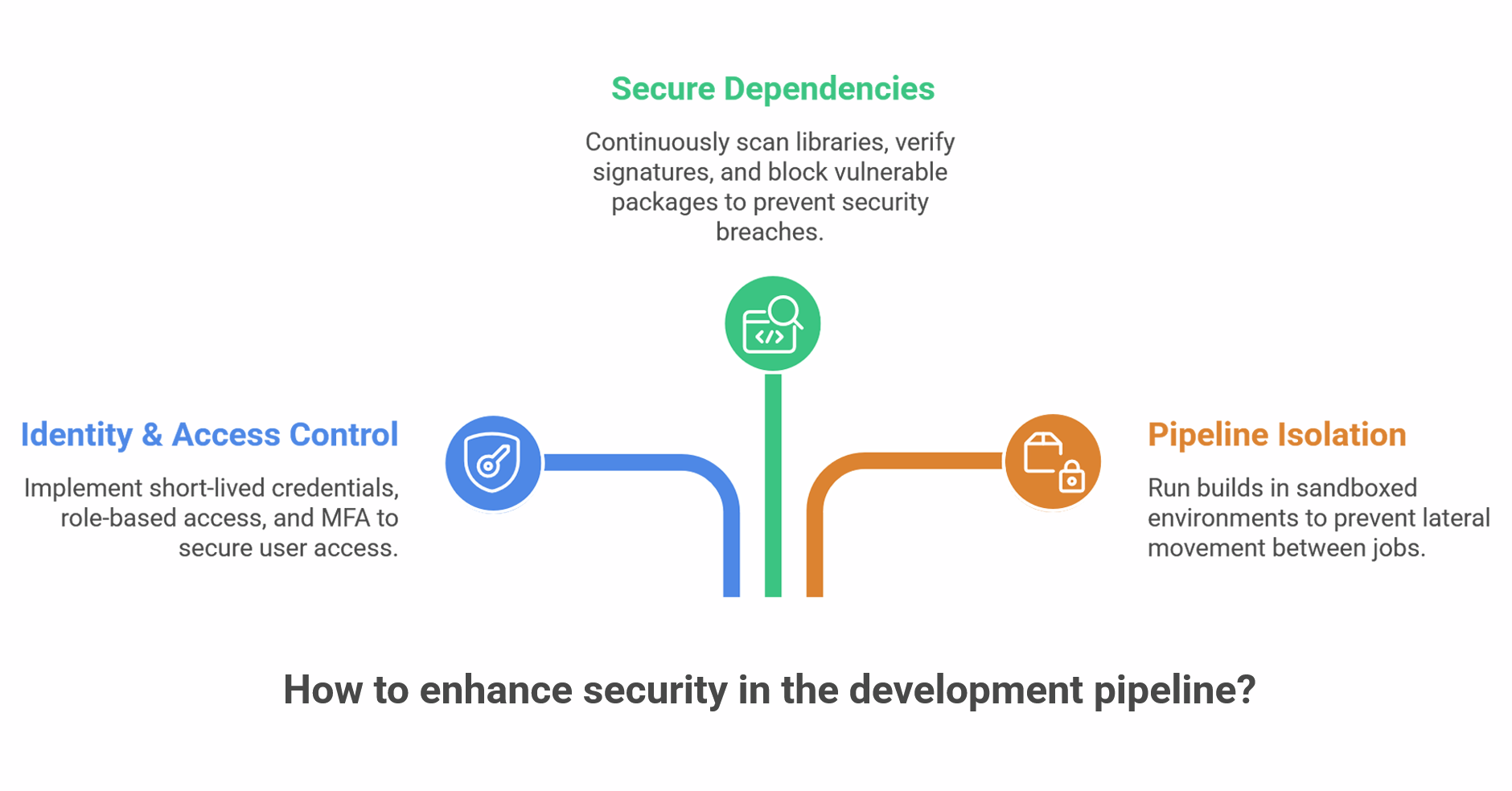

5. Strengthening the CI/CD Pipeline

Identity & Access Control

- Use short-lived credentials

- Implement role-based access

- Enforce MFA for critical actions

Secure Dependencies

- Scan open-source libraries continuously

- Verify signatures (SBOM usage)

- Block vulnerable packages automatically

Pipeline Isolation

- Run builds in sandboxed environments

- Prevent lateral movement between jobs

6. AI Model Security

AI models themselves must be protected:

- Model Integrity Checks – Ensure models are not tampered with

- Access Controls – Restrict who can use or modify models

- Versioning & Auditing – Track every change

- Adversarial Testing – Test models against malicious inputs

7. Real-Time Monitoring & Response

Security doesn’t stop at deployment.

Continuous Monitoring

- Track anomalies in code behavior

- Monitor AI-generated changes

- Detect unusual access patterns

Automated Incident Response

- Trigger alerts instantly

- Rollback unsafe deployments

- Use AI to assist in threat detection

8. Governance, Compliance & Auditability

With AI in the loop, compliance becomes more complex:

- Maintain audit trails for AI decisions

- Ensure data privacy (GDPR, etc.)

- Document AI usage policies

- Implement explainability for critical outputs

9. Best Practices Checklist

- Validate all AI-generated code

- Never expose secrets to AI tools

- Use SBOM and dependency scanning

- Enforce least-privilege access

- Monitor pipeline activity continuously

- Keep humans in critical approval loops

- Regularly test AI systems for vulnerabilities

10. The Road Ahead

AI-native DevSecOps isn’t just an upgrade—it’s a mindset shift. Security must evolve from static controls to

Organizations that succeed will be those that:

- Embrace automation without blind trust

- Combine AI speed with human judgment

- Build pipelines that are secure by design

Conclusion

By 2026, the pipeline is no longer just a delivery mechanism – it has evolved into a dynamic, AI-driven system. Securing it means rethinking how we place trust in code, the tools we use, and even the machines that build them.

The objective isn’t to slow innovation, but to make sure speed and security progress together in a safe and reliable way.